2026

WHU-STree: A multi-modal benchmark dataset for street tree inventory

Ruifei Ding†, Zhe Chen†, Wen Fan, Chen Long, Huijuan Xiao, Yelu Zeng, Zhen Dong*, Bisheng Yang

ISPRS Journal of Photogrammetry and Remote Sensing (IF: 12.2) 2026

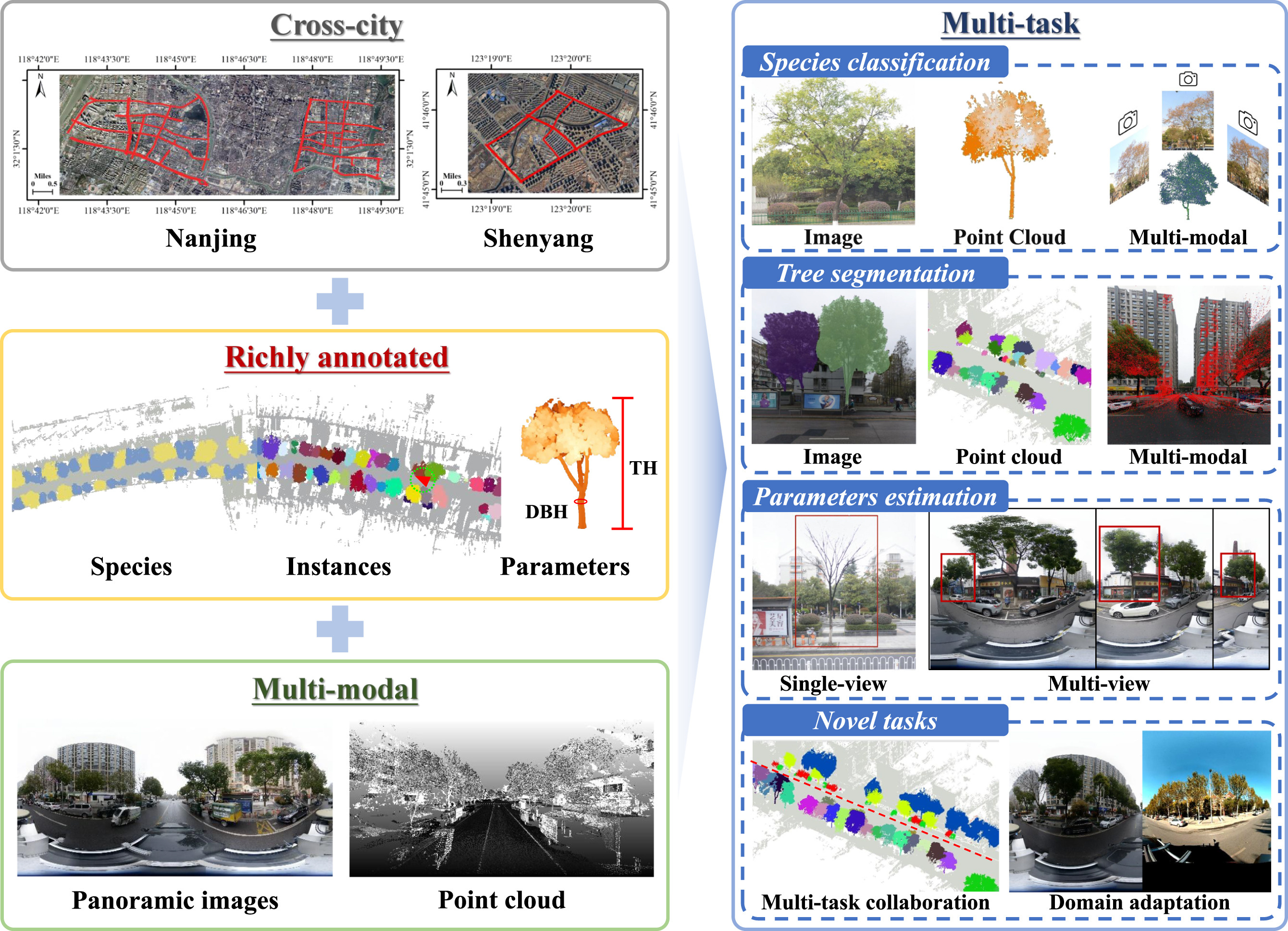

We introduce WHU-STree, a cross-city, richly annotated, multi-modal urban street tree dataset designed for comprehensive inventory analysis, from individual tree segmentation through species classification to macro-level asset management. The dataset also enables settings that are difficult to achieve with traditional MMS datasets, such as concurrently supporting more than 10 inventory tasks, integrating synchronized point clouds with high-resolution images, and benchmarking cross-domain multi-modal fusion.

TSC-Net: a roadside tree structure parameter computation network using street-view images

Chen Long, Dian Chen, Zhe Chen, Ruifei Ding, Zhen Dong*, Bisheng Yang

Geo-spatial Information Science (IF: 5.5) 2026

We propose TSC-Net, an efficient end-to-end network that estimates tree height and diameter from low-cost street-view images. It integrates semantic and geometric cues through a decoupled dual-branch encoder and auxiliary regression tasks, and it significantly outperforms existing methods in both accuracy and speed.

2025

Evaluating cooling and energy-saving potential of Vertical Greenery Systems based on 3D City Models: A case study of three cities

Chengjie Li, Zhe Chen, Fuxun Liang, Zhen Dong, Bisheng Yang*

The International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences 2025

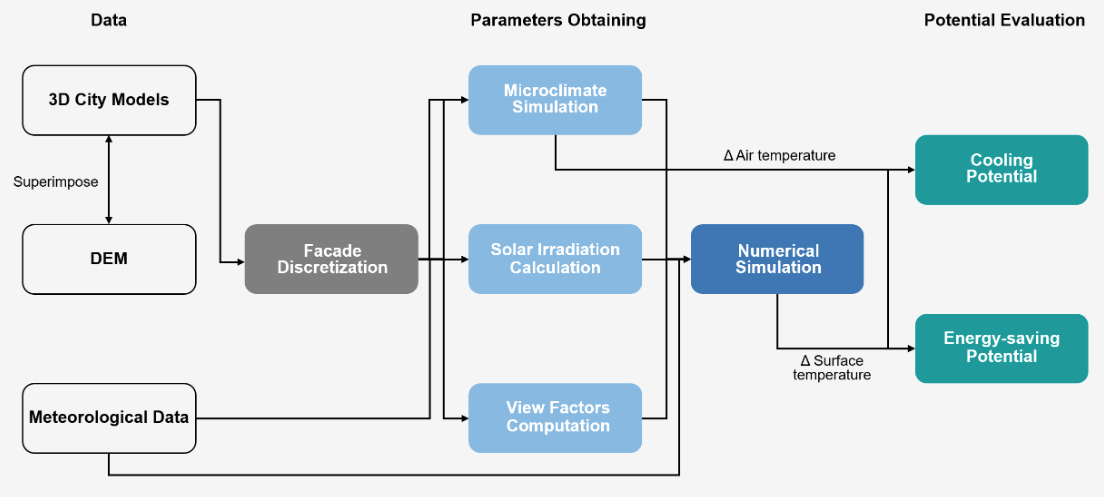

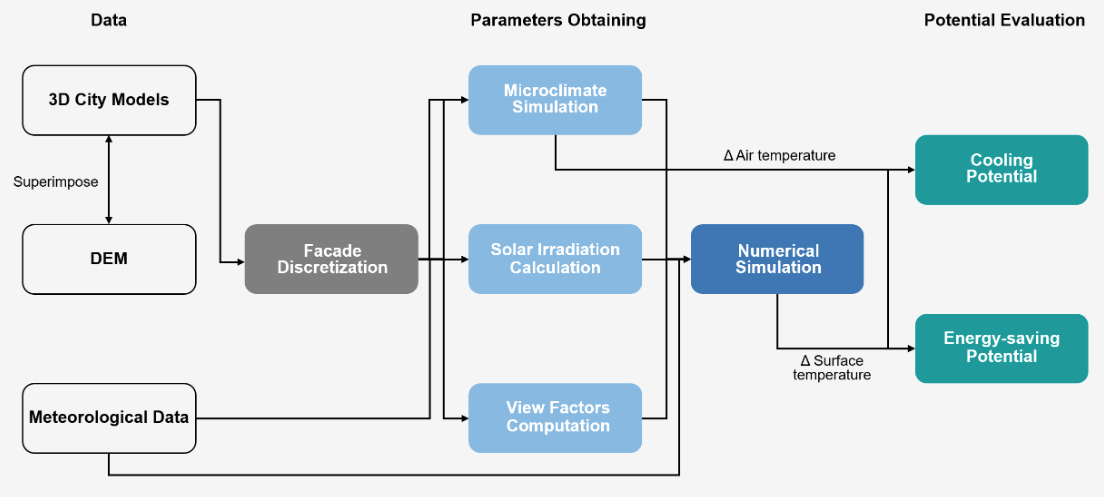

Vertical Greenery Systems (VGS) offer a promising response to urban heat and energy demand, yet their evaluation using precise 3D city models remains limited. To address this, we developed a 3D simulation workflow that integrates microclimate, solar irradiation, and view factor computations to assess the cooling and energy-saving potential of VGS in Wuhan, Hong Kong, and Singapore. Our results show that VGS performance depends strongly on local climate and urban morphology, which provides a foundation for future city-scale optimization.

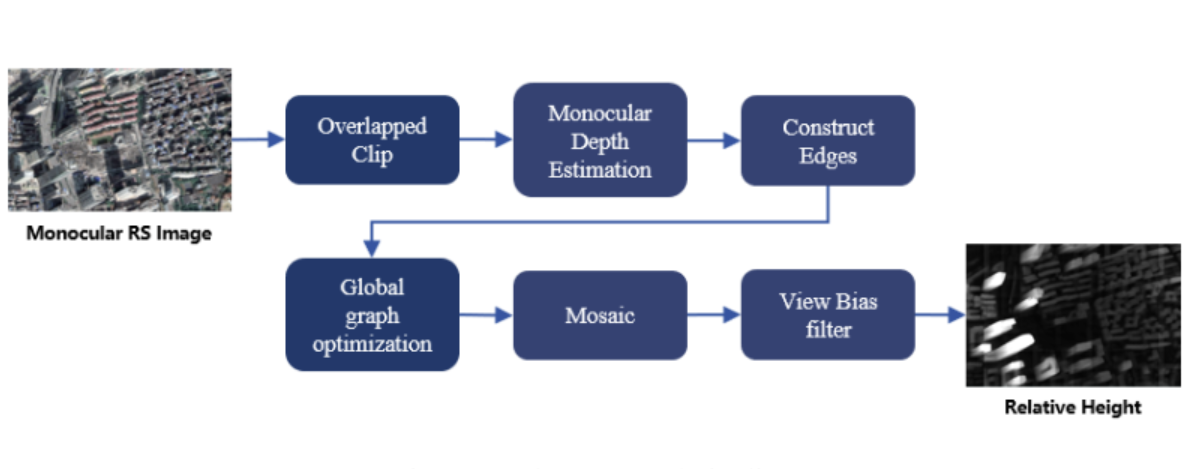

A training-free method for estimating the relative height of buildings

Zhe Chen, Chengjie Li, Fuxun Liang, Tong Peiling, Chen Long, Zhen Dong, Bisheng Yang*

The International Archives of the Photogrammetry, Remote Sensing and Spatial Information Sciences 2025

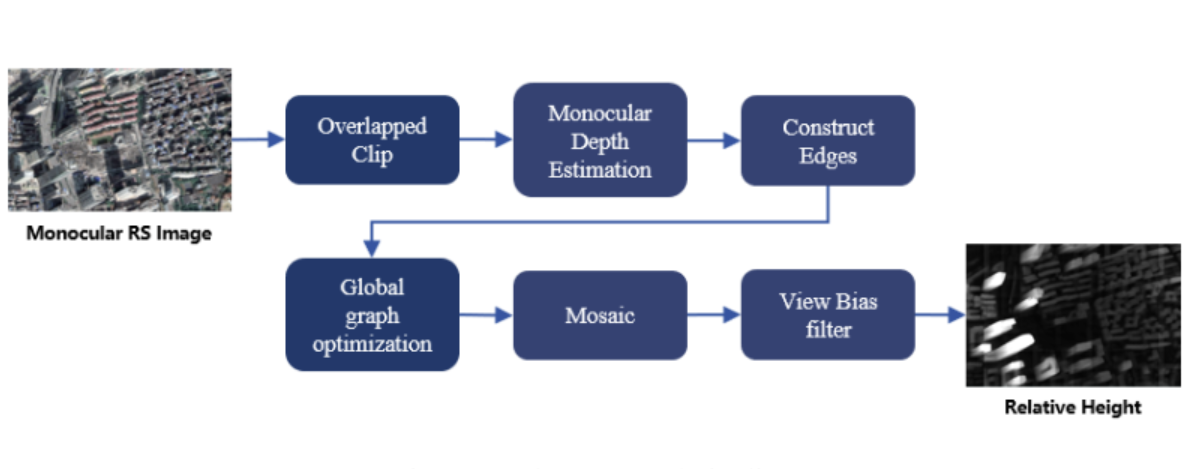

We propose a training-free approach, built on a vision foundation model, for annotation-free estimation of individual building heights. It extracts relative depth from high-resolution remote sensing imagery and structurally optimizes it, significantly outperforming existing baselines.

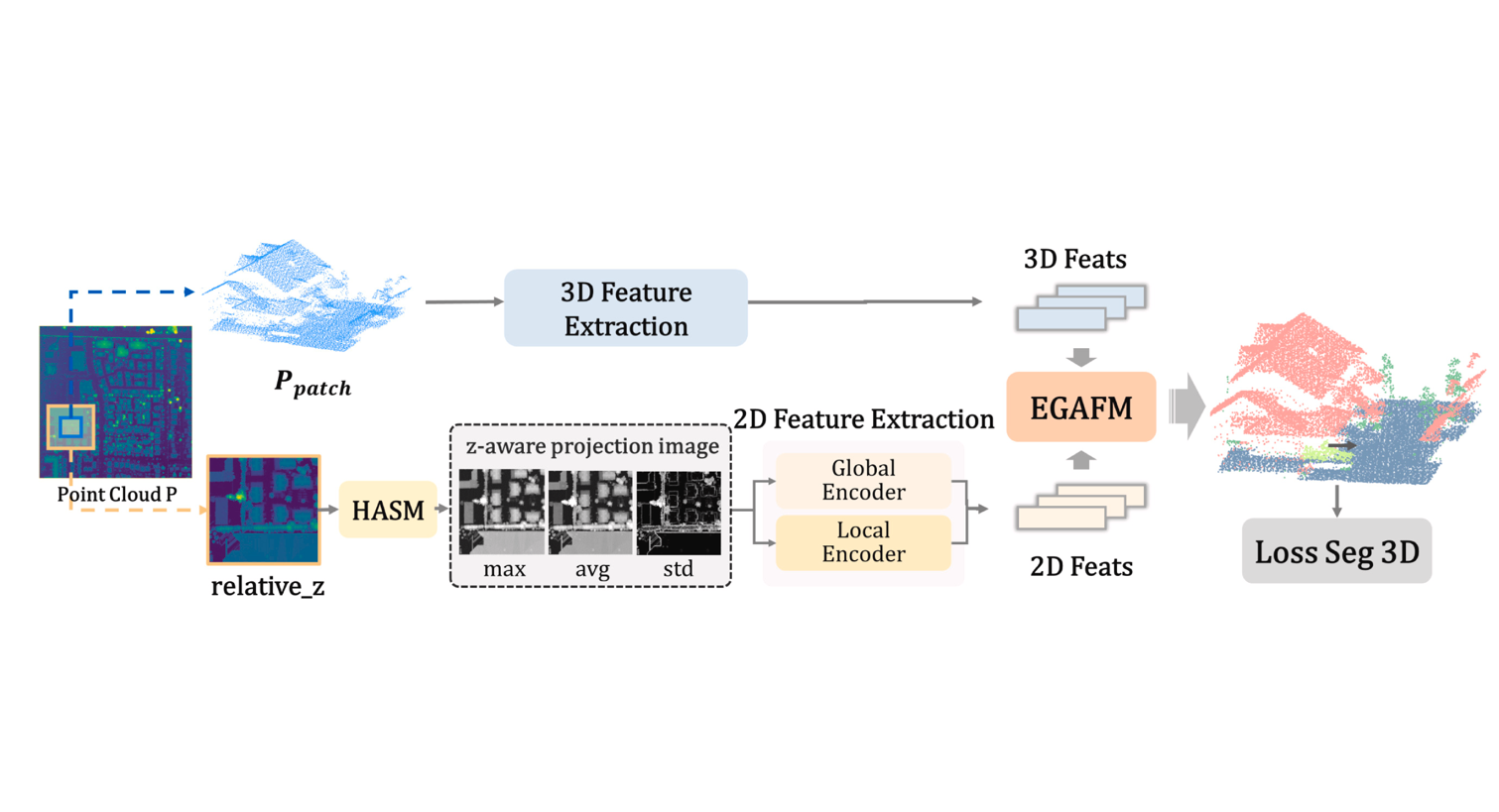

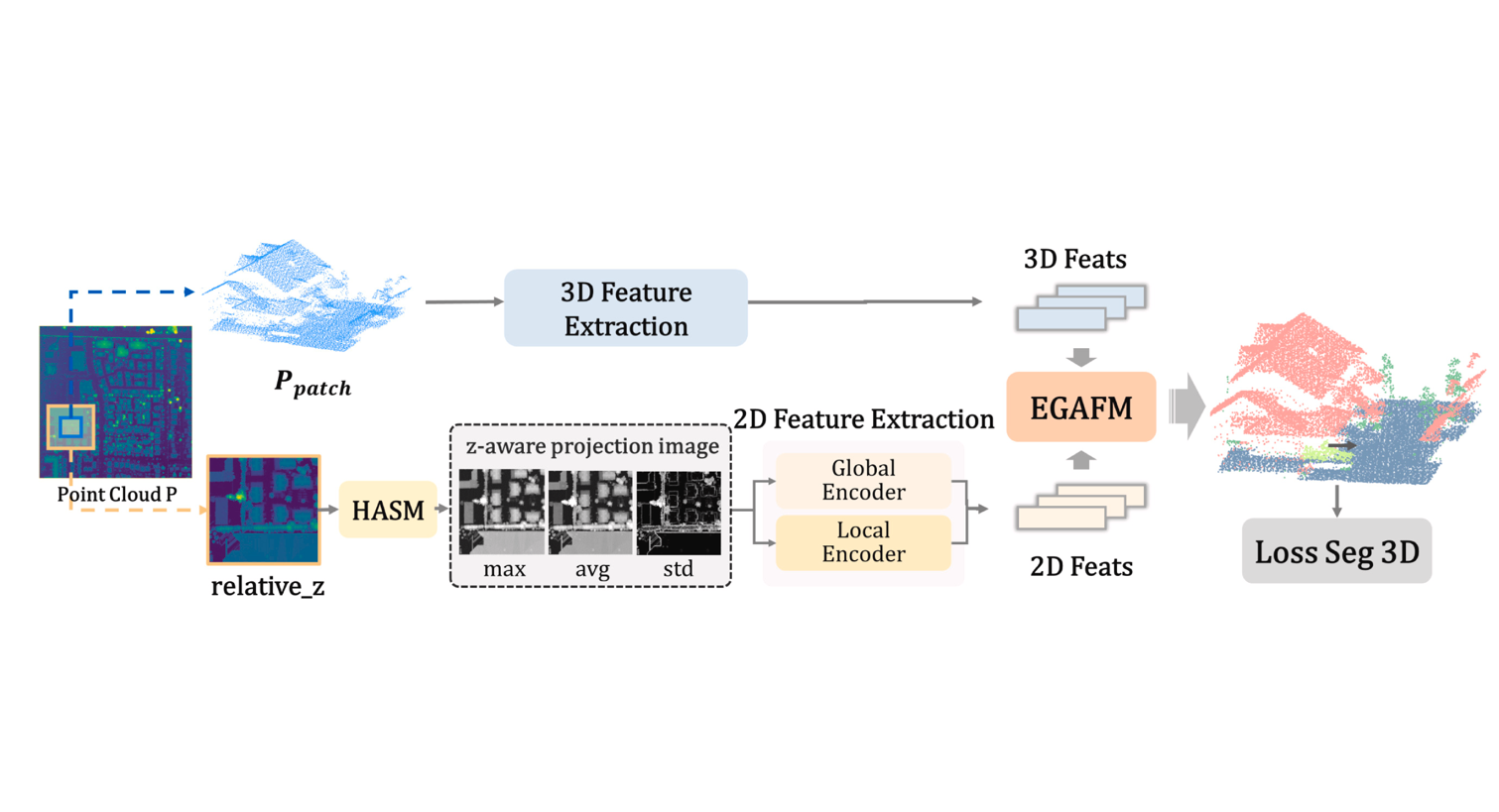

A novel projection map driven multimodal fusion framework for ALS point cloud semantic segmentation

Pangyin Li†, Zhe Chen†, Chen Long, Huazu Zhang, Yang Lv, Ronggang Huang, Zhen Dong, Bisheng Yang*

International Journal of Applied Earth Observation and Geoinformation (IF: 8.6) 2025

We introduce EGAFNet, a multimodal framework for ALS point cloud semantic segmentation that spans elevation-guided input representation, dual-branch feature extraction, and adaptive 2D-3D feature fusion. The framework also enables settings that are difficult to achieve with traditional multi-sensor fusion methods, such as using single-source ALS data for multimodal perception, adaptively scaling object heights in 2D projections, and mitigating feature confusion caused by elevation occlusion.

The Neural City: A next-generation spatio-temporal intelligence paradigm for urban holistic governance

Zhen Dong†, Haiping Wang†, Zhe Chen, Chen Long, Yuning Peng, Yuan Liu, Fuxun Liang, Jian Zhou, Yiping Chen, Fan Zhang, Bisheng Yang*, Deren Li

The Innovation (IF: 25.7) 2025

We outline the Neural City, a paradigm for end-to-end urban governance that links raw urban data directly to holistic decision-making, with the aim of achieving "6W+4R" governance.

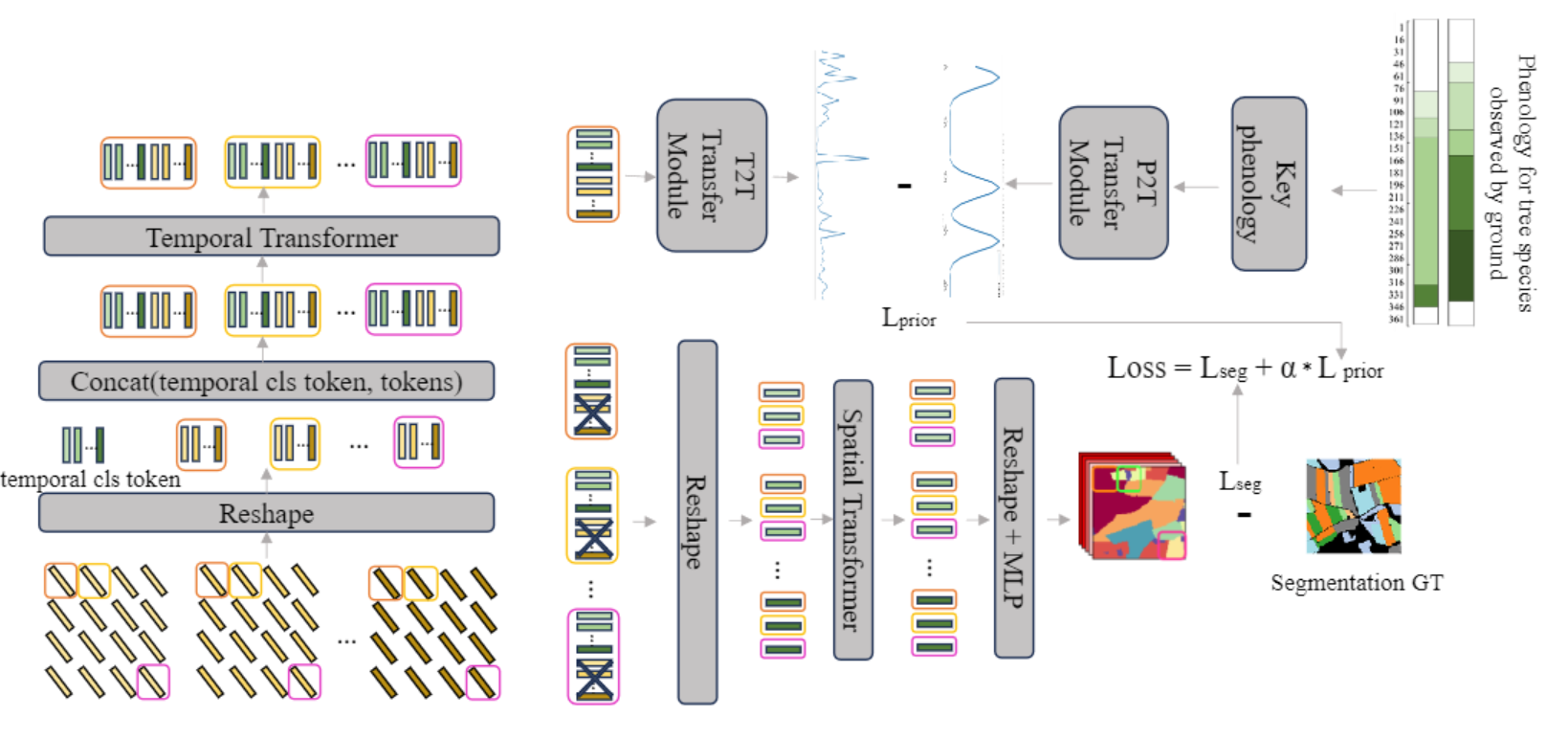

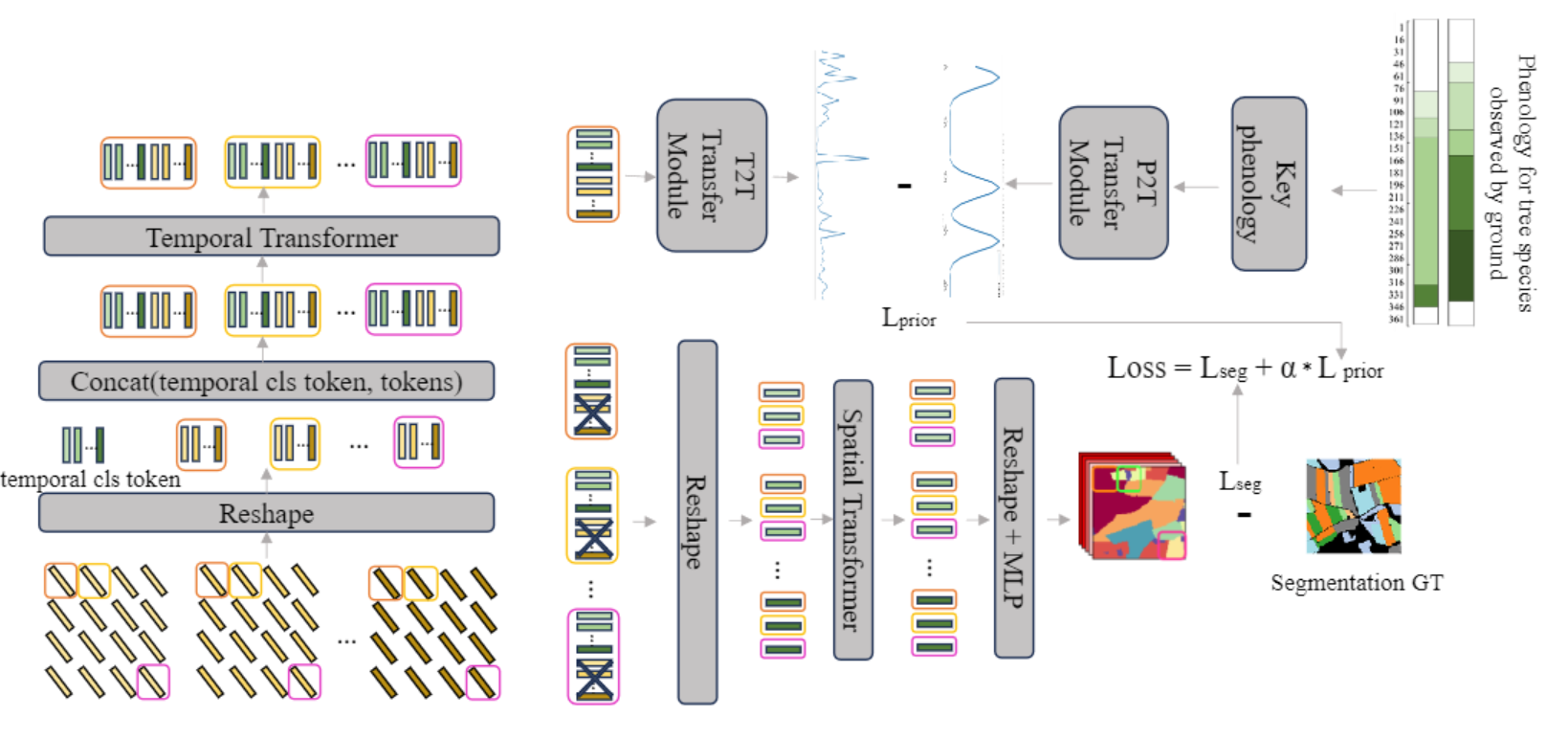

Integrating Phenological Priors with Deep Spatio-Temporal Features for tree species mapping

Zhanyu Ma, Ningning Zhu, Zhen Dong, Ruibo Chen, Bisheng Yang*, Zhe Chen, Chen Long, Ruifei Ding

ISPRS Annals of the Photogrammetry, Remote Sensing and Spatial Information Sciences 2025

We introduce PTSViT, a deep learning framework for automated tree species mapping that bridges spatio-temporal feature extraction, phenological knowledge integration, and macro-level forest carbon estimation. To overcome limitations of traditional Earth observation approaches, it embeds geoscientific priors directly into the loss function, uses ground-based phenology as a supervisory signal, and adapts to regional variations in species behavior.

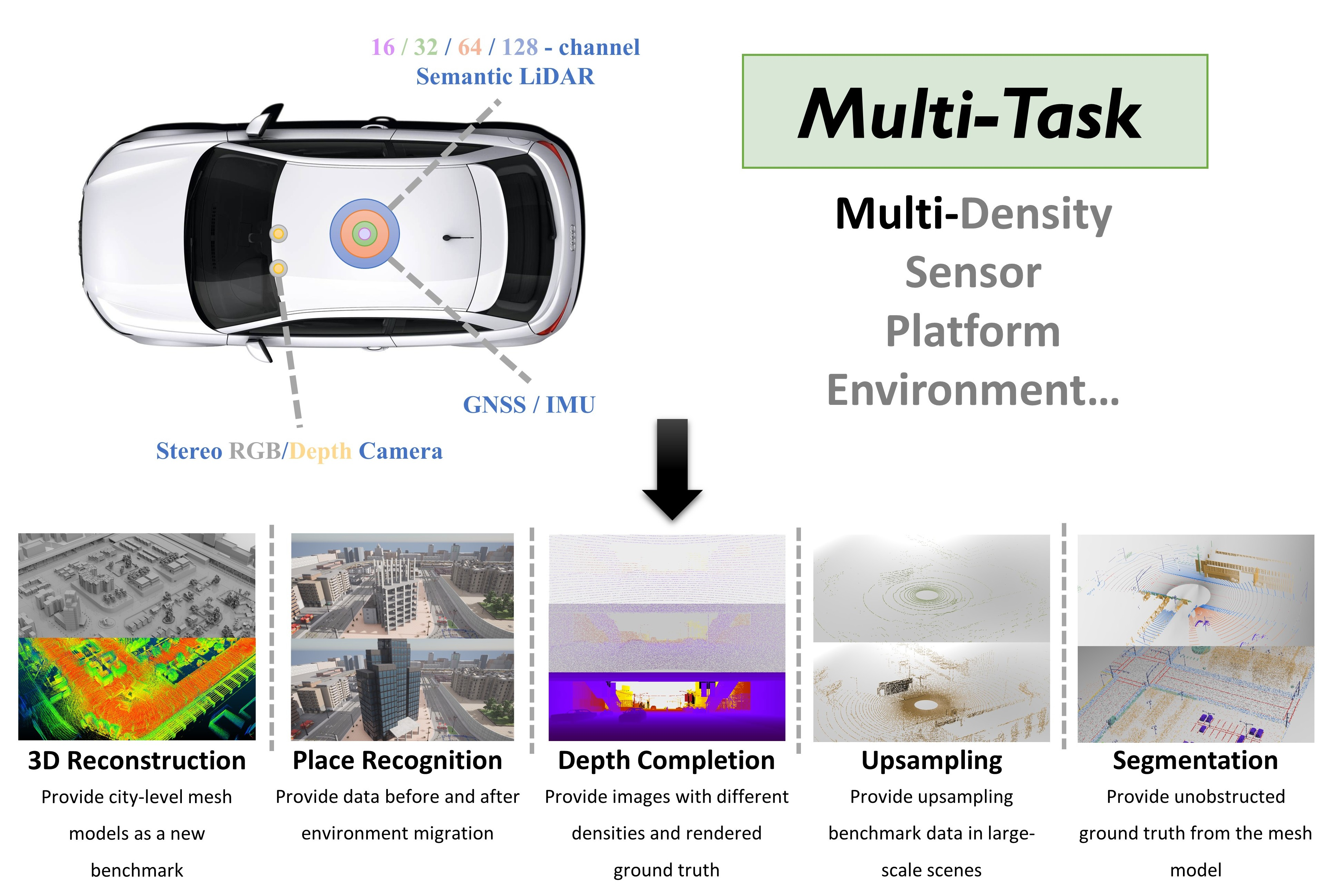

WHU-Synthetic: A Synthetic Perception Dataset for 3D Multi-task Model Research

Jiahao Zhou†, Chen Long†, Yue Xie, Jialiang Wang, Conglang Zhang, Boheng Li, Haiping Wang, Zhe Chen*, Zhen Dong

IEEE Transactions on Geoscience and Remote Sensing (IF: 8.6) 2025

We introduce WHU-Synthetic, a large-scale 3D synthetic perception dataset designed for multi-task learning, from data augmentation through scene understanding to macro-level tasks. The dataset also enables settings that are difficult to achieve in real-world scenarios, such as sampling on city-level models, providing point clouds with different densities, and simulating temporal changes.

2024

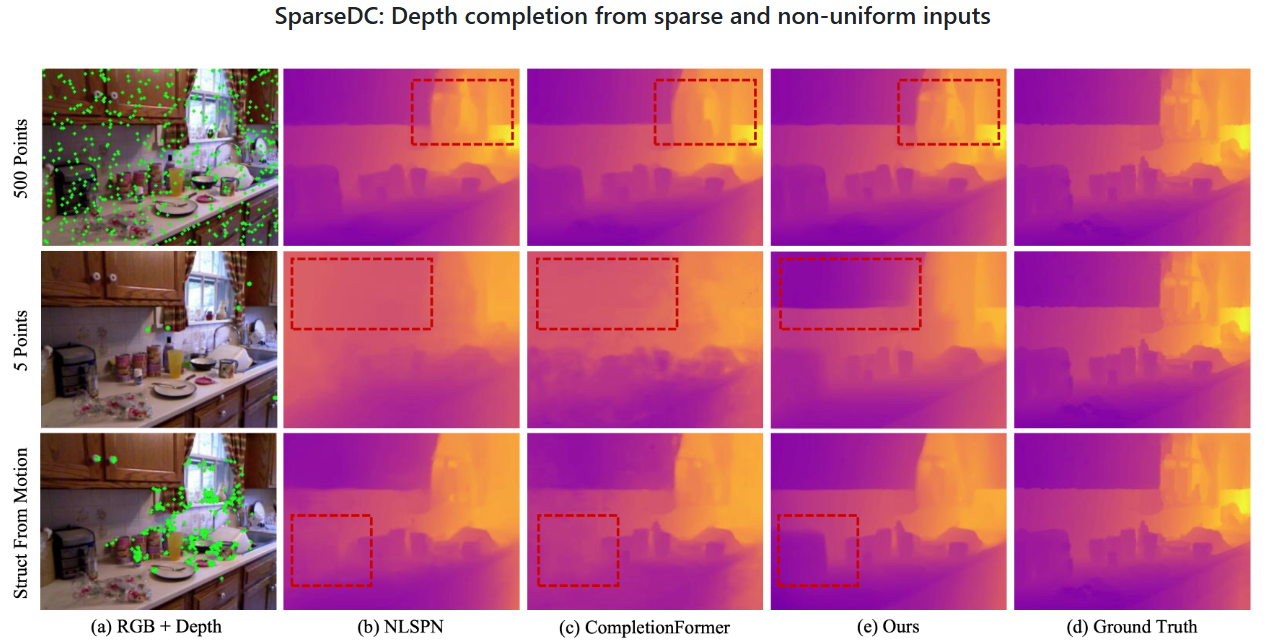

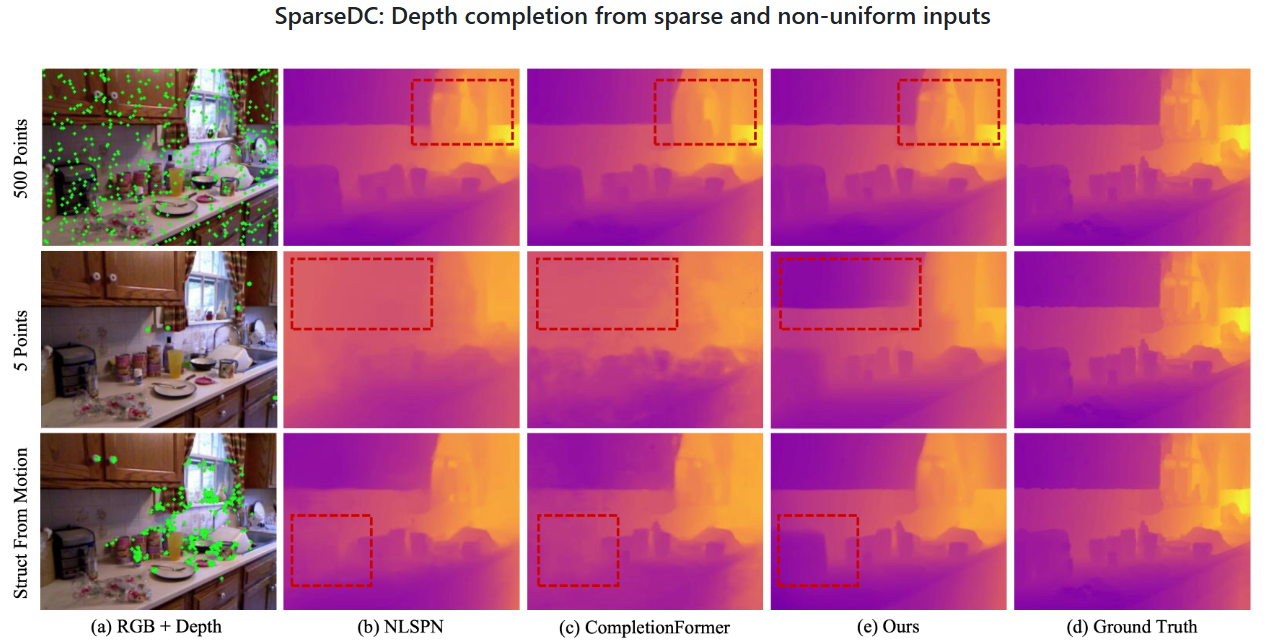

SparseDC: Depth Completion From Sparse and Non-uniform Inputs

Chen Long*, Wenxiao Zhang*, Zhe Chen, Haiping Wang, Yuan Liu, Zhen Cao, Zhen Dong†, Bisheng Yang

Information Fusion (IF: 14.8) 2024

SparseDC is a model for depth completion from sparse and non-uniform inputs. Extensive experiments on indoor and outdoor scenes show that the framework is robust and effective when the input depth is sparse and non-uniform.

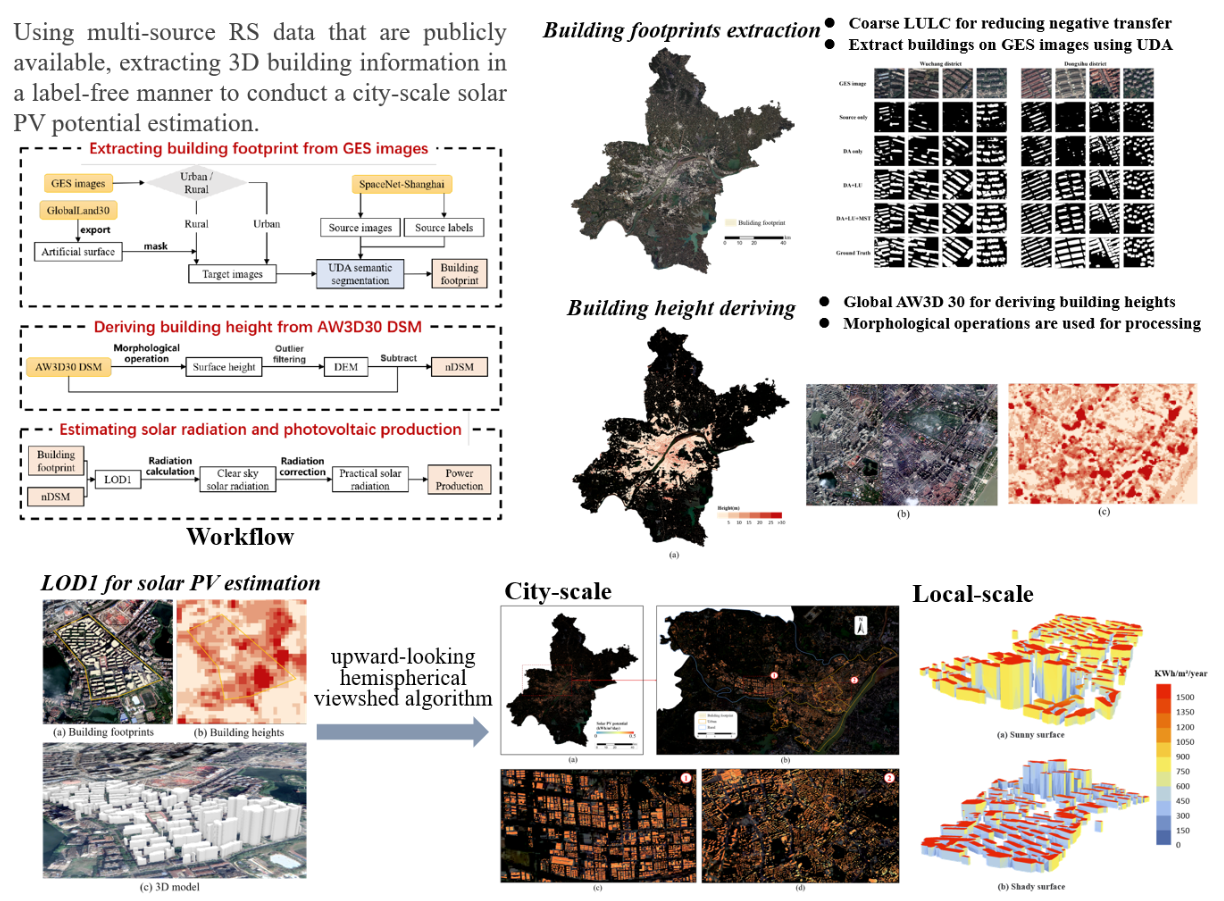

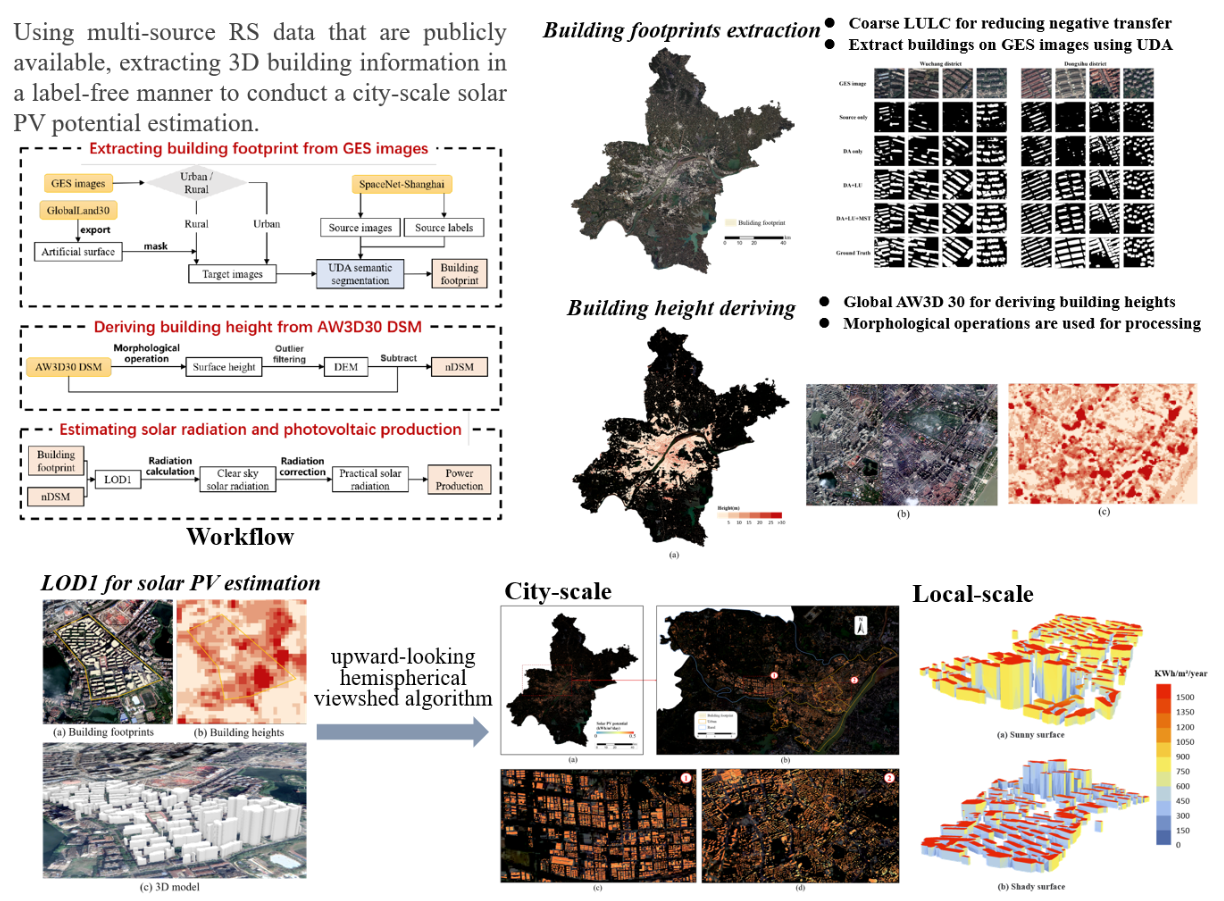

City-scale solar PV potential estimation on 3D buildings using multi-source RS data: A case study in Wuhan, China

Zhe Chen, Bisheng Yang†, Rui Zhu, Zhen Dong

Applied Energy (IF: 10.1) 2024

This study proposes a framework for estimating the solar PV potential of building surfaces at city scale without human annotation or data acquisition costs. Buildings are extracted from Google satellite images using a multi-space joint optimization domain adaptation network, and LoD1 models are generated by combining a global DSM with the building footprints. The framework is validated in a case study of Wuhan, China.

2022

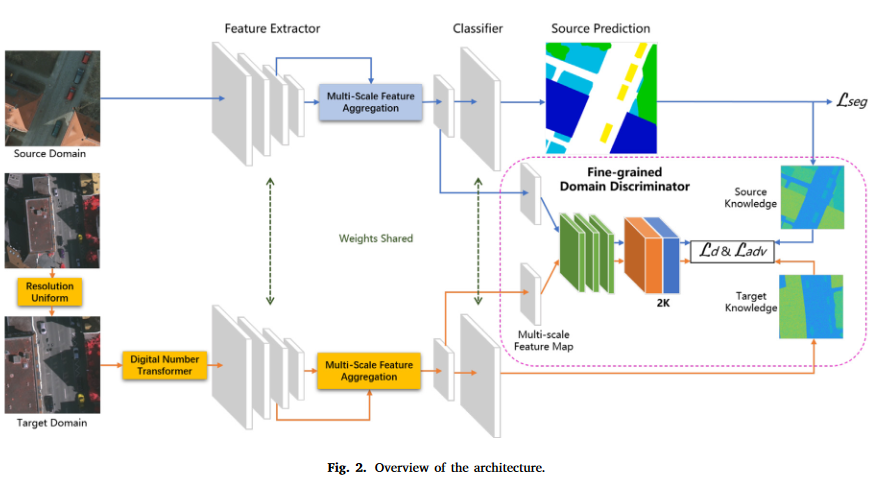

Joint alignment of the distribution in input and feature space for cross-domain aerial image semantic segmentation

Zhe Chen, Bisheng Yang, Ailong Ma, Mingjun Peng, Haiting Li, Tao Chen, Chi Chen†, Zhen Dong

International Journal of Applied Earth Observation and Geoinformation (IF: 7.6) 2022

We propose a framework that jointly aligns the distributions in input and feature space for cross-domain aerial image semantic segmentation. The method performs well in various cross-domain scenarios, including discrepancies in geographic position alone and discrepancies in both geographic position and imaging mode.